Six Generative Design Pitfalls to Avoid

Poor Specification, No Touching! and Biased Results

Autodesk Research is pioneering new forms of generative design today, and our products have more and more capabilities, from suggesting amazing new architecture to helping designers create new virtual worlds in movies. But generative design is not easy, and it can draw criticism if certain pitfalls are not avoided. I’ve been lucky enough to be involved in this area since the early days. Here are a few lessons I’ve learned over the years that might help new researchers aiming to create their own generative design systems.

One: Poor Specification

Some claim that it’s simply too hard to define the functionality of a design. You may as well create the design directly if specifying every element takes so long. Indeed, by the time you have fully specified it, haven’t you basically designed it? This was the argument I faced three decades ago and for some people this argument is still valid today. Here’s what I experienced…

My heart sank. I was attempting to persuade a panel of professors sitting around a large table that I should be permitted to do my proposed PhD project. The idea was to use a genetic algorithm to evolve novel designs from scratch. It was a tough sell for these professors of engineering.

“How can a computer make a design out of nothing?” the professor continued. “Why, look at this table,” the professor tapped on the desk, “how do you define the functionality of a table without telling the computer the design?”

“I believe it can be done,” I argued. “I think we can define desirable functionality: it must be able to support objects above the ground; it must be light enough to move; it shouldn’t fall over. The desired functionalities do not describe what the solution looks like, they specify how to measure the performance of a solution, regardless of its shape.”

It was 1993 and the start of my PhD in generative design – or as I called it then: evolutionary design of solid objects. I’d been reading Richard Dawkins’s The Blind Watchmaker and other works and was sure my idea had merit. If natural evolution could create the myriad novel forms we see in nature, then why couldn’t a genetic algorithm inside a computer do the same thing? It was not an easy start, but I managed to convince the Dean of the School to let me try out my idea.

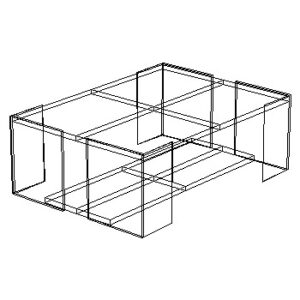

My solution to the problem of design specification was to make it modular. Each functionality was an optional module that added its own objective to the specification. The module might be as simple as a calculation for total mass or as complex as a virtual wind tunnel or ray tracer. (Today we might call these microservices.) With a broad enough repertoire of design specification modules, a user could quickly define radically new functionality with a few clicks. Using this approach, I went on to show for the first time that a computer could generate novel designs from scratch. The first design my system successfully evolved was a table.
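As a rough illustration of the modular idea, here is a minimal Python sketch. It is not the original system: the `ObjectiveModule` class, the dictionary-based table parameters, the two example modules and the weights are all assumptions made up for this example. Each module scores one aspect of a design (lower is better), and a specification is just a list of modules.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical toy representation: a design is a dict of named dimensions
# in metres. The original system's representation is not shown here.
Design = Dict[str, float]


@dataclass
class ObjectiveModule:
    """One optional piece of the specification (lower score is better)."""
    name: str
    evaluate: Callable[[Design], float]
    weight: float = 1.0


def total_mass(design: Design) -> float:
    # Volume of the top plus four square legs, assuming unit density.
    top = design["top_width"] * design["top_depth"] * design["top_thickness"]
    legs = 4 * design["leg_width"] ** 2 * design["leg_height"]
    return top + legs


def height_error(design: Design, target: float = 0.75) -> float:
    # Distance between the table surface and an assumed target height.
    return abs(design["leg_height"] + design["top_thickness"] - target)


def score(design: Design, modules: List[ObjectiveModule]) -> float:
    # The specification is the weighted sum of whichever modules are enabled.
    return sum(m.weight * m.evaluate(design) for m in modules)


if __name__ == "__main__":
    spec = [
        ObjectiveModule("mass", total_mass),
        ObjectiveModule("height", height_error, weight=10.0),
    ]
    table = {
        "top_width": 1.0, "top_depth": 0.5, "top_thickness": 0.05,
        "leg_width": 0.05, "leg_height": 0.70,
    }
    print(score(table, spec))
```

Nothing in `score` knows what a table looks like. Adding or removing a module changes what is being asked for without touching the search itself.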

The table I designed in the 90s and first described in the final chapter of my book “Evolutionary Design by Computers.”

Two: No Touching!

Sometimes, computer scientists can be a little stupid. We’re used to iterative algorithms – stochastic gradient descent, or a genetic algorithm. But when we make our generative design systems, we like to think of them as a single step process. We set up the system for a given design problem, run it, and let the designer interact at the end of the process by choosing an answer that they like. We believe in this so strongly that anything that intervenes in the middle feels dirty and inelegant. And yet when we look at the true success stories…

“Although its evolved body looks relatively simple,” said Karl Sims, “if I were to print out the brain of the turtle on sheets of A4, it would stretch from one end of the auditorium to the other.” The audience murmured in astonishment. I was completely mesmerised.

It was my first ever academic conference and not only were there pioneers such as Sir John Maynard-Smith giving keynotes, but this Sims researcher had just done the impossible. Somehow Karl Sims had created a virtual world with realistic physics and evolved – from scratch – novel virtual creatures complete with novel brains that could run, jump and swim. They looked so organic in their movement that it was like watching a natural history documentary. In 1994 this was equivalent to seeing someone walk on water. The entire audience erupted at the end of the talk in a standing ovation.

Those were the heady days of genetic algorithm research – just as today we have the astonishing breakthroughs in machine learning that are equally exciting. The ground-breaking work I saw that day presented by Karl Sims went on to inspire hundreds of other research projects, and it continues to inspire researchers to this day. But I also remember one of the questions Karl answered after his talk. He was asked whether he had ever needed to help evolution along. He said that occasionally he would hand-pick one individual that he liked the look of and push it through to another stage. Oh! He cheated, I thought.

By 1996 I’d completed my PhD. I evolved novel designs for boat hulls, trains, chip heat sinks, cars… basically anything I could think of. Eventually I even built some of the tables I evolved. Look in my living room and you’ll see my evolved coffee table is still in use. But on one occasion I felt incredibly disappointed for I had also “cheated”. When my system evolved an aerodynamic car design in the computationally expensive “virtual wind tunnel” I’d written, its first attempt at a rear spoiler had been bizarre – two large rabbit ears stuck out of the back. So, I intervened, fixing all other parts of the design and asking the system to give me an alternative. Its next attempt was a nice clean rear spoiler.

Many years later, having worked with architects, designers, artists, and musicians, I now understand that intervening and assisting a generative design system is not cheating, despite my feeling at the time. It’s exactly how designers want to use the system. Design is not a single-step process. The design process is iterative, with designers frequently going back and modifying many aspects – including requirements which often change over time – as they explore options. Our generative design systems should therefore enable design input at any stage and enable all elements to be updated or fixed. We need to change how we think about our systems in order to build tools that suit the needs of designers.
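One way to picture “fixing” parts of a design is a search that only varies the parameters the designer has left unlocked. The sketch below assumes a design is a dictionary of numbers and uses a simple accept-if-better hill climber rather than a full genetic algorithm. The function names, the `locked` set and the toy fitness in the usage note are hypothetical.

```python
import random
from typing import Callable, Dict, Set

Design = Dict[str, float]


def mutate(design: Design, locked: Set[str], rng: random.Random,
           scale: float = 0.1) -> Design:
    """Return a copy of the design with every unlocked parameter perturbed."""
    child = dict(design)
    for key, value in design.items():
        if key not in locked:
            child[key] = value + rng.gauss(0.0, scale)
    return child


def evolve(design: Design, fitness: Callable[[Design], float],
           locked: Set[str], generations: int = 200, seed: int = 0) -> Design:
    """Hill-climb on the unlocked parameters only (lower fitness is better)."""
    rng = random.Random(seed)
    best = dict(design)
    for _ in range(generations):
        candidate = mutate(best, locked, rng)
        if fitness(candidate) < fitness(best):
            best = candidate
    return best
```

A designer who likes the body but not the spoiler could call `evolve(car, fitness, locked={"body_length"})` and get alternatives in which only the spoiler parameters have moved. Changing the `locked` set between runs is what lets the designer step in at any stage.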

Three: Biased Results

Everyone and everything has its own bias. We recognise tunes by our favourite singers because their voices are distinctive and their musical styles are recognisable. Our computer systems are the same. Sometimes a generative system may have accidental biases that are unwanted or inappropriate. These may be caused by the training data for an ML system. They may be caused by the metrics used to measure success. They’re also frequently caused by the representation used to define the designs – each is better at representing different kinds of forms, and each makes it easier or harder to search when attempting to find better alternatives. But bias can also be exploited in a positive way…

“So, the user is presented with six alternatives and can choose their preference to be parents. Children mutate from the parents and the next generation is shown. Over many iterations, you can evolve your art,” explained William Latham. “We’re now working on a Mutator screensaver with a UI for the PC,” he continued, gesturing towards the computer screen which was displaying a weird swirling alien shape.

“Wow!” I said, feeling both awe and a hint of envy that Latham had advanced so far in evolutionary art. I’d come to visit the office in Soho as I did my research, for Latham’s book was an inspiration.

It was the first time I met William in his small Soho office, but I visited him many times over the years as his work continued to develop (and he moved to larger and more impressive spaces). William Latham was an artist who, with the help of IBM researcher Stephen Todd, had developed one of the first generative art systems in the late 1980s. His art was guided by the selection of the artist, but followed his artistic rules encoded in his generative representation. Even though anyone could use Mutator to make art, it was always recognisably Latham’s art, for the forms had the style and shapes that he first designed on paper as an art student, which were then codified into his representation.
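The select-and-mutate loop Latham described can be sketched in a few lines. This is not Mutator's code: the list-of-floats genome, the Gaussian mutation and the `choose` callback standing in for the artist are all assumptions, and for brevity it keeps a single parent per generation rather than several.

```python
import random
from typing import Callable, List, Sequence

Genome = List[float]


def mutate(parent: Genome, rng: random.Random, scale: float = 0.2) -> Genome:
    """Return a child whose genes are small random variations of the parent's."""
    return [gene + rng.gauss(0.0, scale) for gene in parent]


def interactive_evolution(start: Genome,
                          choose: Callable[[Sequence[Genome]], int],
                          generations: int = 10,
                          brood_size: int = 6,
                          seed: int = 0) -> Genome:
    """Show a brood of mutated children each generation and breed from the pick.

    `choose` receives the brood and returns the index of the preferred child.
    In a real tool this would be the person clicking on a rendered form.
    """
    rng = random.Random(seed)
    parent = list(start)
    for _ in range(generations):
        brood = [mutate(parent, rng) for _ in range(brood_size)]
        parent = brood[choose(brood)]
    return parent
```

The person steers only through `choose`. What can appear on screen at all is decided by the genome and by whatever turns it into a form, which is where the author's style is built in.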

This is the right way to approach bias. We cannot remove it, but we can steer it to work for us. More recently I helped create a project at Autodesk Research that looks at using Variational Autoencoders to learn representations for constrained problems such that the new latent representation is biased or focussed purely on the areas of the search space where constraints are satisfied. If everything that the representation can represent always satisfies the constraint, the problem becomes much easier to solve.
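To make the last point concrete without a neural network, here is a hand-written stand-in for a learned decoder. It is not a Variational Autoencoder and not the Autodesk Research project; the constraint (positive part sizes that use up a fixed material budget) and the `decode` function are assumptions chosen for illustration. For latent values of moderate size, every vector decodes to a design that satisfies the constraint, so a search over latent vectors never has to check it.

```python
import math
from typing import List, Sequence


def decode(latent: Sequence[float], budget: float = 1.0) -> List[float]:
    """Map an unconstrained latent vector to part sizes that sum to `budget`.

    A softmax scaled by the budget: each output is non-negative and the
    outputs sum to the budget, whatever the latent values are.
    """
    largest = max(latent)  # subtract the maximum for numerical stability
    exps = [math.exp(z - largest) for z in latent]
    total = sum(exps)
    return [budget * e / total for e in exps]


if __name__ == "__main__":
    parts = decode([0.3, -1.2, 2.0], budget=10.0)
    print(parts, sum(parts))
```

An optimiser can now vary the latent vector freely and spend all of its effort on the objectives. The constraint holds by construction instead of being enforced by penalties or repair steps.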

Bias towards desirable solutions is useful.

Read on for three additional generative design pitfalls to avoid in Part 2 of this post.

Peter Bentley is a Visiting Professor at Autodesk and Honorary Professor at University College London.
