Cognitive Architecture as a Blueprint

Building Intelligent Systems Beyond Models

- A blueprint for intelligent systems: Cognitive architectures help engineers think beyond single AI models by treating intelligence as a system design problem, namely how perception, memory, reasoning, and action work together in a closed loop.

- Towards leveraging cognition as engineering solutions:

  - Foundations: neuroscience levels, definition, core cognitive processes

  - Representation + architecture types: perception, symbolic/emergent/hybrid, Soar/ACT-R/LIDA

  - Engineering toolkit: feedback loops, Bayesian updating, modularity, self-stabilization

  - Where to start: memory, generalization, reasoning, and the final blueprint

- Exploring engineering problems through the lens of cognition: The post suggests turning cognition into practical design patterns for building systems that are more adaptive, explainable, and robust than black-box model-only approaches.

AI models and agentic systems are already proving enormously useful: they can summarize, generate, plan, code, and accelerate work across domains at a scale and speed that would have been hard to imagine a few years ago. But their most visible successes can tempt us into treating benchmark scores and neatly defined metrics as the destination rather than a waypoint—optimizing for what’s easy to measure, not necessarily what’s robust, adaptable, or genuinely intelligent in the open world. A brain-inspired cognitive architecture reframes that goal. Instead of only better outputs, it emphasizes the underlying machinery of intelligence. This machinery is a subtle combination of grounded perception, memory, causal models, attention, learning over time, and self-directed control. This offers a path to systems that don’t just chase metrics, but develop more general, resilient competence.

To understand how brain-inspired intelligence can translate into practical engineering solutions, we start with a primer on the levels of neuroscience, then define cognitive architectures and break cognition into its component processes. Next, we discuss how perception becomes representation and briefly review the major architectural families and their canonical examples. We conclude with an engineer’s playbook for what to build next, showing why this systems-level approach is a compelling way to design more adaptive, explainable, and robust intelligent systems. The ordering matters: it treats intelligence less like a single model and more like a system design problem.

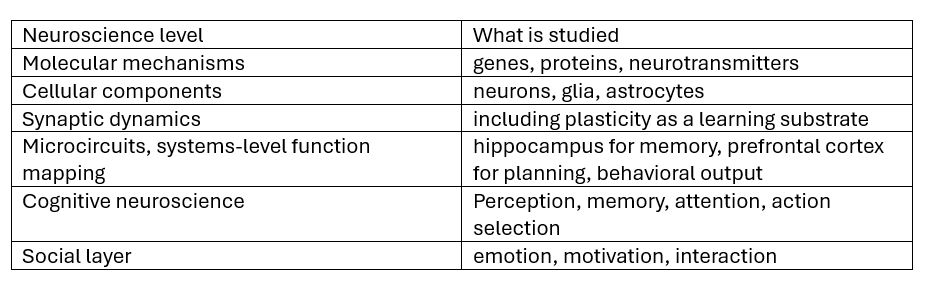

Granularizing neuroscience levels for implementation from an engineering lens

Neuroscience offers inspiration at multiple levels. Which level to choose depends largely on the problem statement of the engineering effort. The table provides a coarse list of levels, each with a very short explanation.

Takeaway: Do not copy the brain. Avoid mixing abstractions.

Many disagreements about memory or reasoning are really disagreements about which level is being discussed.

A working definition: cognitive architecture

A cognitive architecture is framed as a computational framework that models core structures and processes of the brain to produce intelligent behavior.

The phrase intelligent behavior is deliberately central. The architecture is not the neuroscience; it is the engineered scaffold that makes cognition operational.

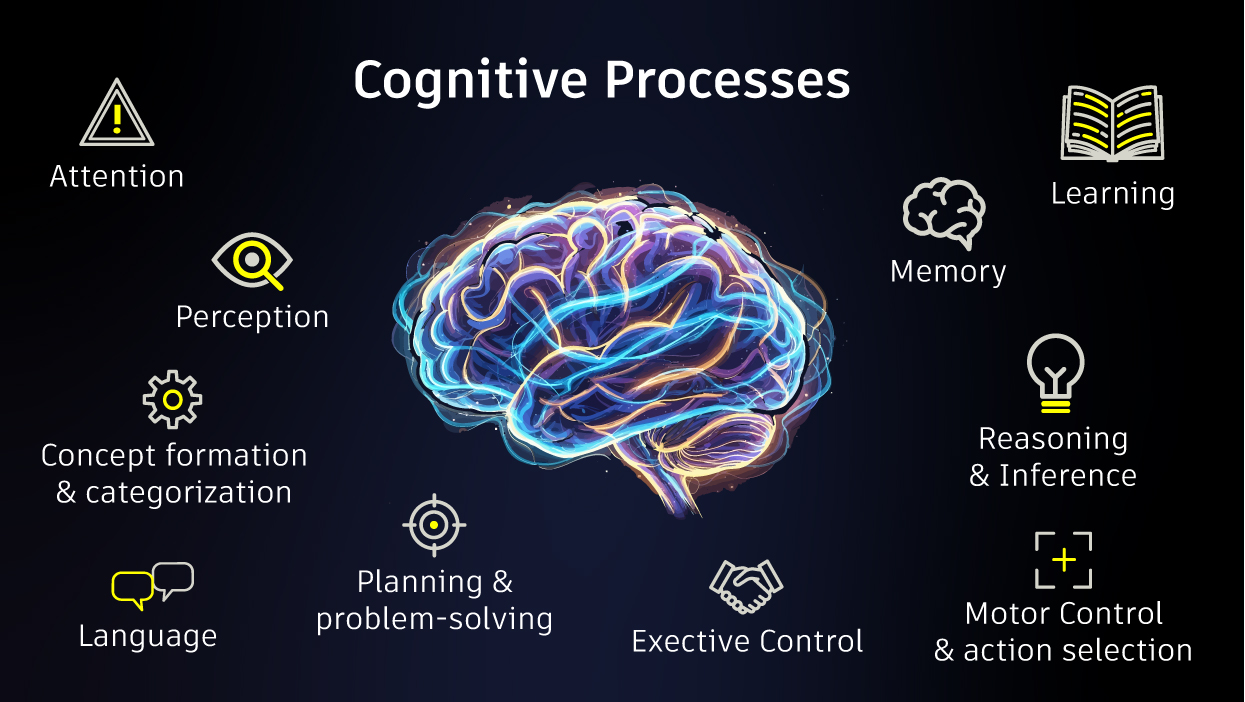

The figure lists the processes that integrate into a system’s overall cognition. Memory, learning, and reasoning are typically the most important for a system to exhibit intelligent behavior. Combinations of these processes solve particular problems. For example, perception and attention together define what is worth noticing in the sensory space. Memory and learning together determine a system’s storage and computational requirements. Reasoning and inference, combined with planning, make it possible to simulate what-if scenarios. Many other combinations exist, but the requirements determine which ones are relevant and how they are used.

Takeaway: Develop processes that are relevant to your use case.

Core structure begins with representation

Once processes are established, attention turns to the core structure that those processes run on to understand how data is represented internally.

Cortical maps serve as an accessible bridge between biology and engineering:

- Retinotopic maps (vision)

- Tonotopic maps (hearing)

- Somatosensory maps (touch)

These maps lay out sensors (or sensory cells) on a 2D/3D surface. In multi-sensor deployments, this layout determines where each sensory signal is represented spatially. A simple example is the data from a single sensor represented on an n-dimensional surface. Another is a network of sensors, typical in IoT scenarios, represented as a 2D/3D grid for attention localization. This description is deliberately coarse and is meant as an engineer’s point of reference rather than a claim of neurobiological fidelity.
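The sensor-grid idea can be sketched in a few lines. This is a minimal illustration, assuming each sensor has a known grid cell and that "attention" simply means the cell deviating most from an expected baseline. The function names, the readings, and the baseline value are all hypothetical and are not taken from any particular architecture.

```python
def build_sensor_map(readings, positions, rows, cols):
    """Place each sensor reading at its (row, col) cell on a 2D grid."""
    grid = [[0.0] * cols for _ in range(rows)]
    for value, (r, c) in zip(readings, positions):
        grid[r][c] = value
    return grid


def attention_focus(grid, baseline):
    """Return the cell whose reading deviates most from the baseline."""
    best_cell, best_saliency = None, -1.0
    for r, row in enumerate(grid):
        for c, value in enumerate(row):
            saliency = abs(value - baseline)  # assumed saliency measure
            if saliency > best_saliency:
                best_cell, best_saliency = (r, c), saliency
    return best_cell


# Hypothetical 2x2 layout of temperature sensors (values in degrees Celsius).
positions = [(0, 0), (0, 1), (1, 0), (1, 1)]
readings = [20.1, 20.3, 27.9, 20.0]

grid = build_sensor_map(readings, positions, rows=2, cols=2)
focus = attention_focus(grid, baseline=20.0)
print(focus)  # (1, 0): the sensor reading 27.9 stands out
```

Because the grid preserves where each sensor sits, downstream processes receive a location to attend to rather than an unordered list of values.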

Takeaway: In practical terms, perception is not a front-end adapter or a preprocessing step. It is a representational pipeline that shapes everything downstream.

Cognitive architectural families: symbolic, emergent, hybrid

Three broad categories organize much of cognitive architecture theory:

- Symbolic: production rules, propositional logic (if this, then that)

- Emergent/connectionist: massively parallel, network-style models; neural approaches sit here (weights in neural networks)

- Hybrid: combines symbolic and connectionist approaches; symbolic rules don’t scale indefinitely and require someone to encode them, so hybrids tend to be pragmatic
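To make the symbolic family concrete, here is a minimal sketch of a production system that fires if-then rules until nothing new can be derived (forward chaining). The facts and rules are invented for illustration, and real symbolic architectures add far more machinery, such as conflict resolution and learning.

```python
def run_production_rules(facts, rules, max_cycles=10):
    """Fire rules whose conditions all hold until no new facts are added."""
    facts = set(facts)
    for _ in range(max_cycles):
        new_facts = {
            conclusion
            for conditions, conclusion in rules
            if conditions <= facts and conclusion not in facts
        }
        if not new_facts:
            break  # nothing left to derive
        facts |= new_facts
    return facts


# Hypothetical hand-written rules: (set of conditions, conclusion).
RULES = [
    (frozenset({"vibration_high", "temperature_high"}), "bearing_wear_suspected"),
    (frozenset({"bearing_wear_suspected"}), "schedule_inspection"),
]

derived = run_production_rules({"vibration_high", "temperature_high"}, RULES)
print(sorted(derived))
```

Every conclusion can be traced back to the rule that produced it, which is where the interpretability of symbolic systems comes from. Every rule also had to be written by hand, which is the scaling limit that motivates hybrids.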

Out of the hundreds of architectures currently in existence, the following three provide a point of reference, including the trade-offs they expose.

- Soar (State, Operator, and Result)

  - Soar is presented as a general cognitive architecture for agents that solve problems, plan, learn, and act using production rules and decision cycles (input → decision → application → learning). When an impasse occurs, the architecture sets up a subgoal aimed at resolving it.

  - Strengths include structured problem solving and interpretability. Limitations include brittleness outside structured domains, rule explosion, and a recurring theme: perception misalignment (insufficient detail on converting raw sensor data into perception).

- ACT-R (Adaptive Control of Thought—Rational)

  - ACT-R is positioned closer to human cognition and behavior: it maps cognitive functions onto brain regions and is implemented as a modular architecture with modules, buffers, and production systems. It is described as a hybrid (symbolic + sub-symbolic) architecture.

  - Its strength lies in this hybrid design, which offers higher biological fidelity and more human-like predictability. Limitations include knowledge bottlenecks, serial processing constraints, and no account of broader cognitive factors such as emotion and fatigue.

- LIDA (Learning Intelligent Decision Agent)

  - LIDA is introduced through Global Workspace Theory, which treats consciousness as an attention spotlight that broadcasts a small set of relevant information to specialized systems so they can coordinate. It runs in cognitive cycles (perception → attention competition → broadcast → action selection, then repeat), with learning noted at multiple levels.

  - Its strengths are process integration and attention-driven control. Limitations include less standardization, a conceptual richness that is hard to validate, and, in this framing, lower predictive power than Soar or ACT-R.

Takeaway: Cognitive architectures are not new. They have the potential to contribute to AI systems when engineered well.

Cognition already shows up in engineered systems

Two industrial examples ground cognition as closed-loop adaptation rather than philosophical metaphor.

Cognitive radio is described as dynamic spectrum access: spectrum is often allocated statically but underutilized across space and time. The system continuously perceives whether spectrum is in use in a spatiotemporal context and assigns unused spectrum to a user. For example, a phone switches to an unused TV channel when nearby licensed users are absent.
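The perceive-then-assign step can be sketched as follows. The sketch assumes simple energy detection, where a channel counts as free when its sensed power is below a threshold. The channel names, power levels, and the -90 dBm threshold are hypothetical, and a real system would also have to handle regulation, sensing errors, and the return of licensed users.

```python
def sense_spectrum(energy_dbm, threshold_dbm=-90.0):
    """Mark a channel as free when its sensed energy is below the threshold."""
    return {channel: level < threshold_dbm for channel, level in energy_dbm.items()}


def assign_channel(energy_dbm, threshold_dbm=-90.0):
    """Pick the quietest free channel, or None if every channel is occupied."""
    occupancy = sense_spectrum(energy_dbm, threshold_dbm)
    free = [channel for channel, is_free in occupancy.items() if is_free]
    if not free:
        return None
    return min(free, key=lambda channel: energy_dbm[channel])


# Hypothetical sensed power per TV channel at one place and time.
sensed = {"tv_21": -62.0, "tv_22": -97.5, "tv_23": -93.0}
channel = assign_channel(sensed)
print(channel)  # tv_22: free and the quietest of the free channels
```

Calling `assign_channel` again whenever new measurements arrive closes the loop: perception of the spectrum keeps driving the action of reassigning it.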

Cognitive radar is framed as adaptive sensing: if an object cannot be perceived well, internal models/parameters (frequency, waveforms, etc.) are altered to improve detection. For example, a radar changes waveform frequency to track a drone hidden in heavy rain.

These examples reflect how the perception-action loop leads to intelligent cognitive systems.

Cognitive architecture vs. cognitive AI

A clean distinction is drawn:

- Cognitive architecture: top-down, starting from a blueprint where pieces fit within an overall pipeline

- Cognitive AI: bottom-up, often focused on one cognitive process at a time (e.g., decision-making) without specifying how it communicates with the rest

Cognitive architecture also explicitly cares about a core structure for cognition; cognitive AI may use smaller structures optimized for the isolated process without an overall substrate.

Takeaway: The choice is use-case dependent.

Turning cognition into an engineering toolkit

The engineering translation is explicit and implementable:

- Closed-loop feedback control: perception → processing → output → feedback into perception

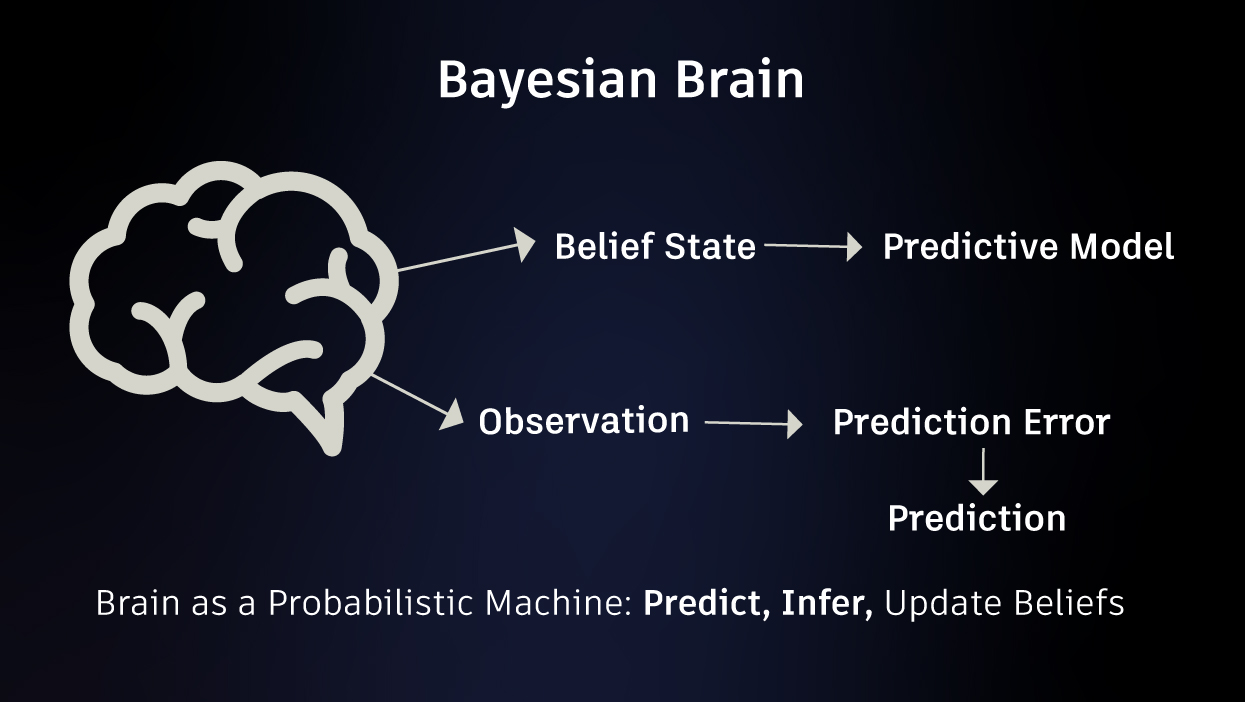

- State estimation + Bayesian inference: begin with assumptions about the environment; update internal models as evidence arrives; connect this to predictive coding / active inference and minimizing surprise

- Prediction + error correction: a practical extension of the above

- Modularity: modularize processes and design communication pathways between them to build efficient intelligent systems

- Self-stabilization / homeostasis: stability recovery after perturbation; framed in information-theoretic terms as minimizing surprise, and in energetic terms as minimizing energy expenditure to achieve tasks
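The first three items above can be read as a single loop. Below is a minimal sketch, assuming a scalar signal and a fixed correction gain. Both are illustrative simplifications, and the name `closed_loop` and its `gain` parameter are hypothetical. A full Bayesian treatment would adapt the gain to uncertainty rather than fix it.

```python
def closed_loop(observations, initial_estimate, gain=0.5):
    """Track a signal by repeatedly predicting it and correcting by the error."""
    estimate = initial_estimate
    errors = []
    for observed in observations:
        prediction = estimate                 # predict from the internal model
        error = observed - prediction         # compare evidence with expectation
        estimate = prediction + gain * error  # feed the error back into the model
        errors.append(abs(error))
    return estimate, errors


# The environment settles at 10.0 while the system starts out believing 0.0.
estimate, errors = closed_loop([10.0] * 8, initial_estimate=0.0)
print(round(estimate, 3))  # 9.961
print(errors[:3])          # [10.0, 5.0, 2.5]
```

The prediction error halves on every cycle, a loose stand-in for "minimizing surprise". The same loop also recovers after a perturbation: if the observations jump to a new level, the estimate converges toward it again.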

Takeaway: Learning selectively from neuroscience and turning those lessons into engineering solutions could help create the core substrate missing from today’s AI.

Getting started: memory, generalization and reasoning

Among the different processes that form cognition, we focus on three components for building a cognitive architecture. As discussed earlier, memory, learning, and reasoning are of most interest when developing an intelligent system.

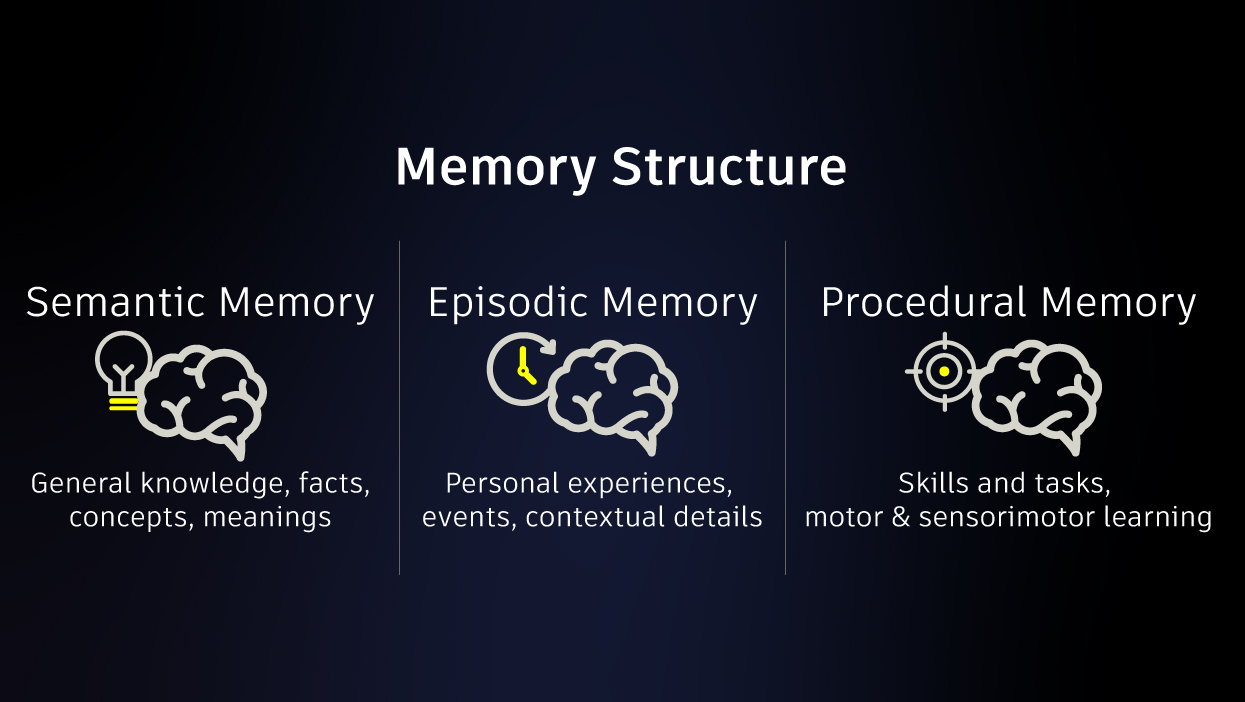

1) Memory structure

At minimum: semantic, episodic, and procedural memory modules.

Semantic memory stores facts that don’t change with context; episodic memory stores events as episodes and supports generalization into semantic memory; procedural memory stores stepwise processes.

Interactions and overlap between these systems are expected.
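One way to picture the three modules and their interaction is the sketch below. It assumes a very simple consolidation rule, in which an observation repeated across enough episodes is promoted to a semantic fact. The `MemorySystem` class, the `min_count` threshold, and the example content are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class MemorySystem:
    semantic: dict = field(default_factory=dict)    # context-free facts
    episodic: list = field(default_factory=list)    # events, in order of arrival
    procedural: dict = field(default_factory=dict)  # named step-by-step processes

    def record_episode(self, context, observation):
        """Store one event together with the context it occurred in."""
        self.episodic.append((context, observation))

    def consolidate(self, min_count=3):
        """Promote observations seen in at least min_count episodes to facts."""
        counts = Counter(observation for _, observation in self.episodic)
        for (subject, value), count in counts.items():
            if count >= min_count:
                self.semantic[subject] = value


memory = MemorySystem()
memory.procedural["restart_pump"] = ["close valve", "cut power", "restore power"]
for shift in ("mon", "tue", "wed"):
    memory.record_episode(shift, ("pump_3", "overheats under load"))
memory.record_episode("thu", ("pump_4", "leaks"))

memory.consolidate()
print(memory.semantic)  # {'pump_3': 'overheats under load'}
```

The single observation about `pump_4` stays episodic, while the repeated one about `pump_3` loses its context and becomes a general fact. This is the generalization path from episodic to semantic memory described above.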

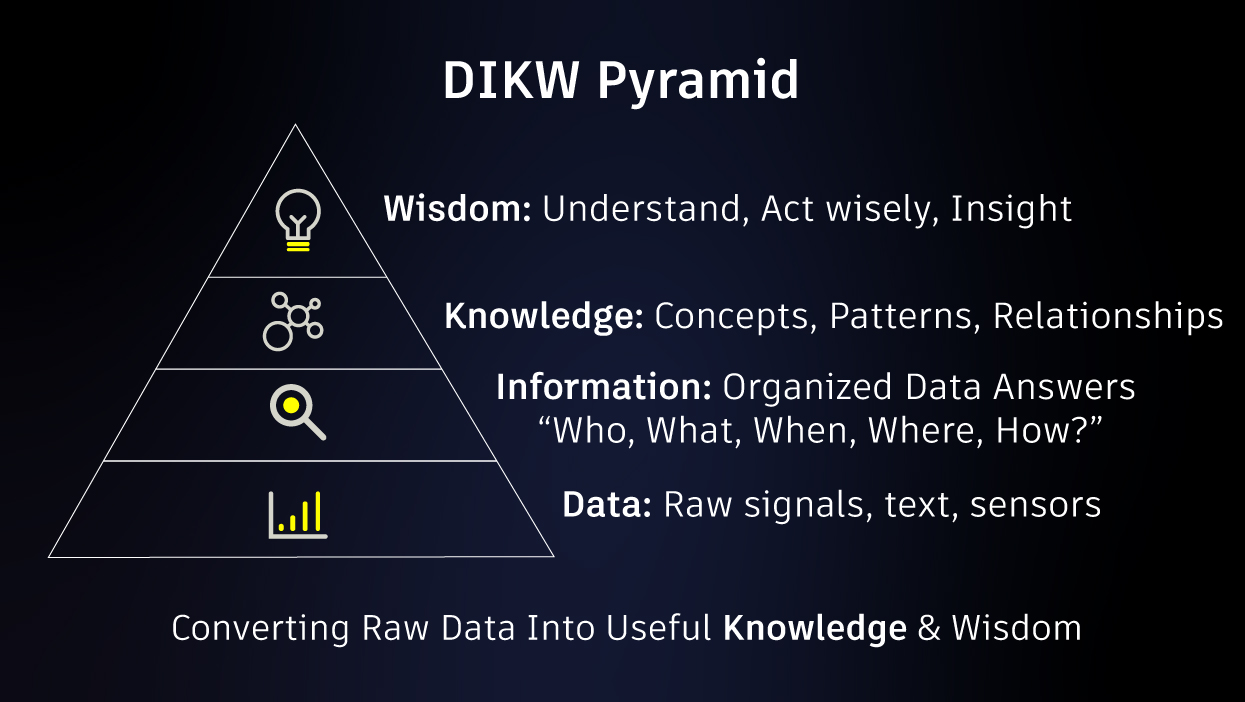

2) Data-Information-Knowledge-Wisdom (DIKW) pyramid

DIKW is used as a generalization/specialization lens: raw sensor signals (data) become information via processing, then knowledge via patterns/concepts/relationships, and wisdom when applied to use cases. This is representative of the learning process widely used in AI.

The implementation details in the brain are acknowledged as lacking consensus, but the framing is retained for engineering utility.
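As an engineering reading of the pyramid, each level can be treated as a function applied to the output of the level below. The sketch assumes windowed sensor readings, and the trend rule, the tolerance, and the resulting decision are invented purely for illustration.

```python
from statistics import mean


def to_information(raw_windows):
    """Data -> information: summarize each window of raw readings."""
    return [mean(window) for window in raw_windows]


def to_knowledge(window_means, tolerance=0.5):
    """Information -> knowledge: detect a pattern across the windows."""
    steps = [b - a for a, b in zip(window_means, window_means[1:])]
    rising = bool(steps) and all(step > tolerance for step in steps)
    return "rising_trend" if rising else "stable"


def to_wisdom(pattern):
    """Knowledge -> wisdom: apply the pattern to a use-case decision."""
    return "schedule maintenance" if pattern == "rising_trend" else "keep monitoring"


# Hypothetical temperature readings, grouped into three time windows.
raw = [[70.1, 69.9, 70.0], [71.2, 71.0, 71.1], [72.4, 72.6, 72.5]]
decision = to_wisdom(to_knowledge(to_information(raw)))
print(decision)  # schedule maintenance
```

Each step discards detail and gains generality, which is the generalization/specialization lens the pyramid is meant to provide.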

3) Bayesian brain (as a computational lens)

The operational loop is presented as expectations vs. evidence → comparison → corrective action, with a vocabulary of priors (existing knowledge), beliefs (what is thought to be true), and evidence (gathered via senses). This is representative of the reasoning and inference processes of cognition.

A caveat is embedded: neuroscientists may argue the Bayesian brain is a myth, but the goal here is to use the computational concepts for engineered systems that exhibit intelligent behavior.
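Taken purely as a computational concept, the loop maps onto Bayes' rule: the prior is multiplied by the likelihood of the evidence under each hypothesis and then renormalized. A minimal sketch follows, with two hypothetical machine states and made-up probabilities.

```python
def bayes_update(prior, likelihoods):
    """Return the posterior over hypotheses given P(evidence | hypothesis)."""
    unnormalized = {h: prior[h] * likelihoods[h] for h in prior}
    total = sum(unnormalized.values())
    return {h: p / total for h, p in unnormalized.items()}


# Prior: existing knowledge before any evidence arrives (hypothetical values).
belief = {"healthy": 0.9, "faulty": 0.1}

# Evidence model: how likely an alarm is in each state (hypothetical values).
alarm_likelihood = {"healthy": 0.05, "faulty": 0.8}

belief = bayes_update(belief, alarm_likelihood)  # first alarm observed
print(round(belief["faulty"], 2))  # 0.64

belief = bayes_update(belief, alarm_likelihood)  # second alarm observed
print(round(belief["faulty"], 3))  # 0.966
```

A fault that started as a 10% expectation becomes the dominant belief after two alarms. The corrective action, for example triggering an inspection, would then be selected from the updated belief.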

Takeaway: Starting with memory structure, generalization (learning over multiple instances), and reasoning (inference) can lead to a minimalist implementation.

The architectural gameplan

This blueprint advocates the following thought process: intelligent behavior comes from closed-loop coordination among perception, representation, memory, inference, and action, not from a single model in isolation. That view naturally elevates perception and representation to first-class design decisions, and it motivates a perception-first architecture in which representation feeding into memory provides a structured substrate for continual learning and intelligent behavior. Pivoting to a more brain-inspired architecture can potentially go a long way toward developing intelligent solutions for engineering problems. Engineers gain techniques that are more transparent, explainable, and deterministic than existing black-box approaches. With memory, learning, and reasoning as core tenets, bio- and neuro-inspiration will influence the development of new-age intelligent systems.

Nikhil Dhamne is a Cognitive Engineering Architect intern at Autodesk Research. He is currently doing his PhD on Neuro-plausible Cognitive Architecture for Prognostics and Health Management at the University of the West of England (UK).
